Products are grown, not built. Cultivate the practice.

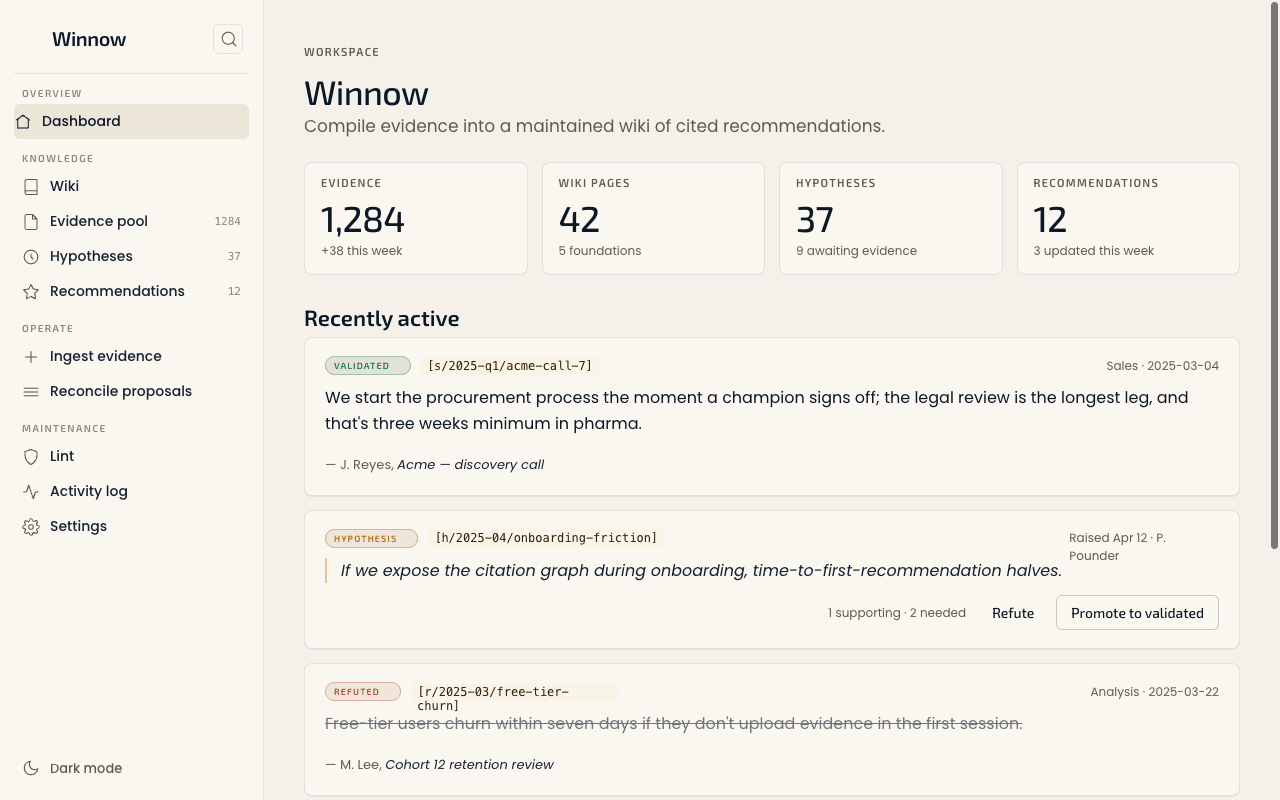

Sales notes in HubSpot, research in Notion, decisions in Linear, support tickets in Zendesk — every team holds part of the picture; nobody holds the whole. Cultivate is the methodology for growing products from evidence to launch. Winnow is the open-source tool that refines that evidence into cited recommendations; Bushel (forthcoming) measures the recommendations out into the artefacts a launch needs.

Prepare the soil.

Harvest the field.

Winnow the grain.

Most product teams operate in speculation but treat speculation as fact. Cultivate's contribution is making speculation visible, traceable, and resolvable across the whole growing cycle — foundations as soil, evidence as water, recommendations as the fruit. Three states. One source of truth. Every claim cited.

Drop in what you already write

Sales notes, research transcripts, decision memos, support tickets. Drag-and-drop a folder, point a webhook at it, or sync from Dropbox / Drive / OneDrive. Whatever you've got, in whatever format.

The wiki maintains itself

An LLM reads each piece of evidence, decides which pages should change, and proposes the edits. Reinforcements and contradictions with prior beliefs are surfaced — no silent rewrites. You review the diff before anything sticks.
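One way to picture that review step is a list of proposed edits, each tagged with how it relates to what the wiki already says, where nothing is written until a reviewer approves it. The sketch below is illustrative only: `ProposedEdit`, `Relation`, `contradictions`, and `applyApproved` are hypothetical names, not Winnow's actual API.

```typescript
// Hypothetical shapes for illustration; none of these names come from Winnow.
type Relation = "reinforces" | "contradicts" | "new";

interface ProposedEdit {
  id: string;          // identifier for this proposal
  page: string;        // wiki page the edit targets
  replacement: string; // proposed new page text
  relation: Relation;  // how the evidence relates to the prior belief
  evidenceId: string;  // the evidence record that triggered the proposal
}

// Surface contradictions explicitly instead of rewriting silently.
function contradictions(edits: ProposedEdit[]): ProposedEdit[] {
  return edits.filter((e) => e.relation === "contradicts");
}

// Apply only the edits a reviewer approved; return a new wiki, leave the old one intact.
function applyApproved(
  wiki: Map<string, string>,
  edits: ProposedEdit[],
  approvedIds: Set<string>
): Map<string, string> {
  const next = new Map(wiki);
  for (const e of edits) {
    if (approvedIds.has(e.id)) {
      next.set(e.page, e.replacement);
    }
  }
  return next;
}

// Placeholder data, not real evidence.
const wiki = new Map<string, string>([
  ["onboarding", "Onboarding takes one session."],
  ["pricing", "Free tier converts well."],
]);

const proposed: ProposedEdit[] = [
  { id: "e1", page: "onboarding", replacement: "Onboarding takes two sessions.", relation: "contradicts", evidenceId: "placeholder-1" },
  { id: "e2", page: "pricing", replacement: "Free tier converts very well.", relation: "reinforces", evidenceId: "placeholder-2" },
];

// The reviewer approves only the first proposal.
const updated = applyApproved(wiki, proposed, new Set(["e1"]));
```

In this sketch the unapproved proposal `e2` leaves the `pricing` page untouched, which is the "review the diff before anything sticks" behaviour in miniature.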

Hand cited briefs to your editor

Recommendations are copyable, structured, and sourced. Hand them to Cursor, Claude Code, or your IDE. Citations survive the paste, so the AI build stays grounded in the same evidence the team used.

Procurement adds three to four weeks to enterprise rollouts in regulated verticals.

If we expose the citation graph during onboarding, time-to-first-recommendation halves.

Free-tier users churn within seven days if they don't upload evidence in the first session.

Engineering-grade. Quietly opinionated.

A reading-room product, not a dashboard product. Calm where competitors are loud. Editorial where competitors are utilitarian. Self-host or run in the cloud — same source of truth either way.

Everything is sourced. Nothing is assumed.

Each recommendation is a structured brief: a position, the decisions it implies, and every cited source. Click any citation to read the original. Demote it back to hypothesis if the evidence turns thin. Export the whole brief as a paste-ready prompt.
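As a rough sketch of what such a brief could look like as data, assuming a shape with a position, decisions, citations, and one of three states: the type and function names below (`Brief`, `Citation`, `demote`, `toPrompt`) are hypothetical and not taken from Winnow. The citation id format is borrowed from the terminal sample on this page, and the excerpt text is a placeholder.

```typescript
// Hypothetical shapes for illustration; Winnow's real schema may differ.
type ClaimState = "validated" | "hypothesis" | "refuted";

interface Citation {
  id: string;      // e.g. "s/2025-q1/acme-call-7"
  excerpt: string; // quoted passage from the original evidence
}

interface Brief {
  title: string;
  state: ClaimState;
  position: string;
  decisions: string[];
  citations: Citation[];
}

// Demote a brief back to hypothesis without mutating the original.
function demote(brief: Brief): Brief {
  return { ...brief, state: "hypothesis" };
}

// Render the brief as plain text, keeping citation ids inline so they survive a paste.
function toPrompt(brief: Brief): string {
  const lines: string[] = [
    `# ${brief.title} (${brief.state})`,
    "",
    `Position: ${brief.position}`,
    "",
    "Decisions:",
    ...brief.decisions.map((d) => `- ${d}`),
    "",
    "Sources:",
    ...brief.citations.map((c) => `- [${c.id}] ${c.excerpt}`),
  ];
  return lines.join("\n");
}

const example: Brief = {
  title: "Onboarding friction",
  state: "validated",
  position: "Exposing the citation graph during onboarding shortens time-to-first-recommendation.",
  decisions: ["Show the citation graph in the first session."],
  citations: [{ id: "s/2025-q1/acme-call-7", excerpt: "(placeholder excerpt)" }],
};

const demoted = demote(example);
const prompt = toPrompt(example);
```

Keeping the citation id in the rendered text, rather than in separate metadata, is one simple way to make sure the source reference travels with the brief into whatever editor it is pasted into.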

Two tools. One methodology.

Winnow is the first tool: open source today, gathering and refining evidence into cited recommendations. Bushel is the second, in development: it takes Winnow's recommendations and measures them out into the audience-specific artefacts a launch needs (release notes, in-app copy, support articles, sales enablement). Both sit inside the Cultivate methodology; neither replaces it.

A note on the data question (where evidence comes from, where it goes, and what stays first-party) lives at "Where the data comes from, where it goes".

Built for product people who write things down.

- Senior product managers compiling evidence across squads.

- Heads of product running quarterly strategy reviews.

- Founders codifying what the team already believes — and what they don't.

- Engineering managers turning research into prioritised, sourced tickets.

Want to see what this looks like in your role specifically? How Cultivate fits product teams →

Run Winnow on your own infrastructure.

A single command to install. Your wiki, your evidence, your model. The cloud version is identical to self-hosted — the only difference is who runs the database.

    # install
    $ npm i -g winnow

    # initialise a workspace
    $ winnow init ./my-product
    ✓ evidence pool (0 records)
    ✓ wiki (5 foundation pages)
    ✓ recommendations (0 briefs)

    # compile a recommendation
    $ winnow compile "onboarding friction"
    validated [s/2025-q1/acme-call-7]
    hypothesis [h/2025-04/onboarding-friction]
    refuted [r/2025-03/free-tier-churn]
    → wrote recommendations/onboarding-friction.md

CLI, plugin, web — same source of truth.

Use Winnow from your terminal, from a Discord agent, from n8n, or from the web app. The wiki and citations are the contract; the surface is up to you.